by Henry Zumbrun

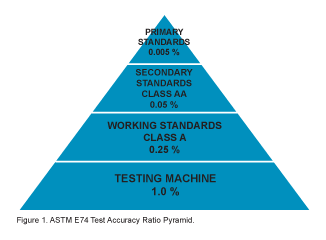

This paper explores the hierarchy, standards, and long-term stability of deadweight primary standard force machines used in load cell calibration. While many understand the basic principles of load cell calibration, fewer fully grasp the distinctions between force standards and their implications, such as those between ASTM International’s E74 Practices for Calibration and Verification for Force-Measuring Instruments (ASTM E74) [1] and the International Standard ISO 376 Metallic materials — Calibration of force-proving instruments used for the verification of uniaxial testing machines (ISO 376) [2]. Although they differ in classification and methodology, both standards emphasize the critical role of traceability and uncertainty in force calibration.

Deadweight primary standards represent the highest level of accuracy, achieving expanded uncertainties below 0.002 % of applied force. The paper discusses the construction, materials, and performance characteristics that contribute to their exceptional stability. It cites studies by the National Institute of Standards and Technology (NIST) and the United Kingdom’s National Physical Laboratory (NPL) that demonstrate negligible mass drift over decades. The paper further outlines best practices for maintenance, interlaboratory comparisons, and statistical process control, and argues against frequent disassembly of deadweight systems because of the associated risks and costs.

Drawing on historical context, technical evolution, and real-world data, this paper concludes that with proper design, environmental control, adequate maintenance, and verification procedures, deadweight primary standards can maintain their accuracy and traceability for intervals of 20–30 years or more, reinforcing their role as the gold standard in force calibration.